YARN, short for Yet Another Resource Negotiator, is the “operating system” for HDFS: it manages the cluster's resources and schedules jobs. MapReduce is the original processing model for Hadoop clusters. It distributes (maps) work within the cluster, then organizes and reduces the results from the nodes into a response to a query. Many other processing models are available for the 3.x version of Hadoop. For more information, refer to the official Apache Hadoop documentation.
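To make the map and reduce steps more concrete, here is a minimal word-count sketch in plain Python. It runs entirely in one process and does not use Hadoop's API. The function names (`map_phase`, `shuffle`, `reduce_phase`, `word_count`) are hypothetical and only illustrate the model. On a real cluster, each input split would be handled by a separate map task on a node, and the framework would do the shuffle for you.

```python
from collections import defaultdict
from typing import Dict, Iterable, Iterator, List, Tuple


def map_phase(split: str) -> Iterator[Tuple[str, int]]:
    """Map: emit (word, 1) for every word in one input split."""
    for word in split.lower().split():
        yield word, 1


def shuffle(pairs: Iterable[Tuple[str, int]]) -> Dict[str, List[int]]:
    """Shuffle/sort: group all intermediate values by key, sorted by key."""
    groups: Dict[str, List[int]] = defaultdict(list)
    for key, value in pairs:
        groups[key].append(value)
    return dict(sorted(groups.items()))


def reduce_phase(key: str, values: List[int]) -> Tuple[str, int]:
    """Reduce: combine all values for one key into a single result."""
    return key, sum(values)


def word_count(splits: List[str]) -> Dict[str, int]:
    # Hypothetical driver: the splits are mapped one after another here,
    # whereas Hadoop would run the map tasks in parallel across the cluster.
    intermediate = [pair for split in splits for pair in map_phase(split)]
    grouped = shuffle(intermediate)
    return dict(reduce_phase(key, values) for key, values in grouped.items())


if __name__ == "__main__":
    print(word_count(["the quick brown fox", "the lazy dog", "The fox"]))
    # {'brown': 1, 'dog': 1, 'fox': 2, 'lazy': 1, 'quick': 1, 'the': 3}
```

The grouping step is the important design point. Each reducer receives every value for a given key, no matter which node produced it, so it can compute the final count on its own.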

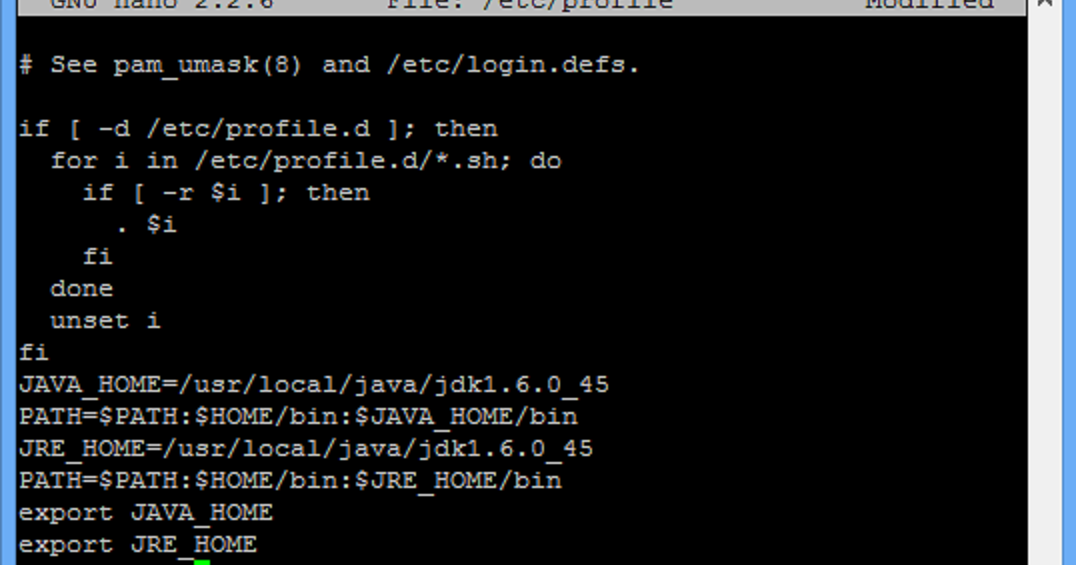

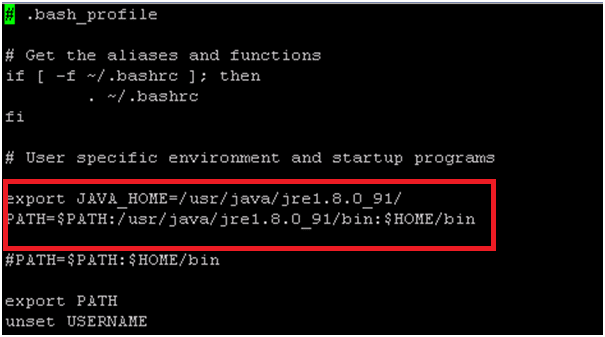

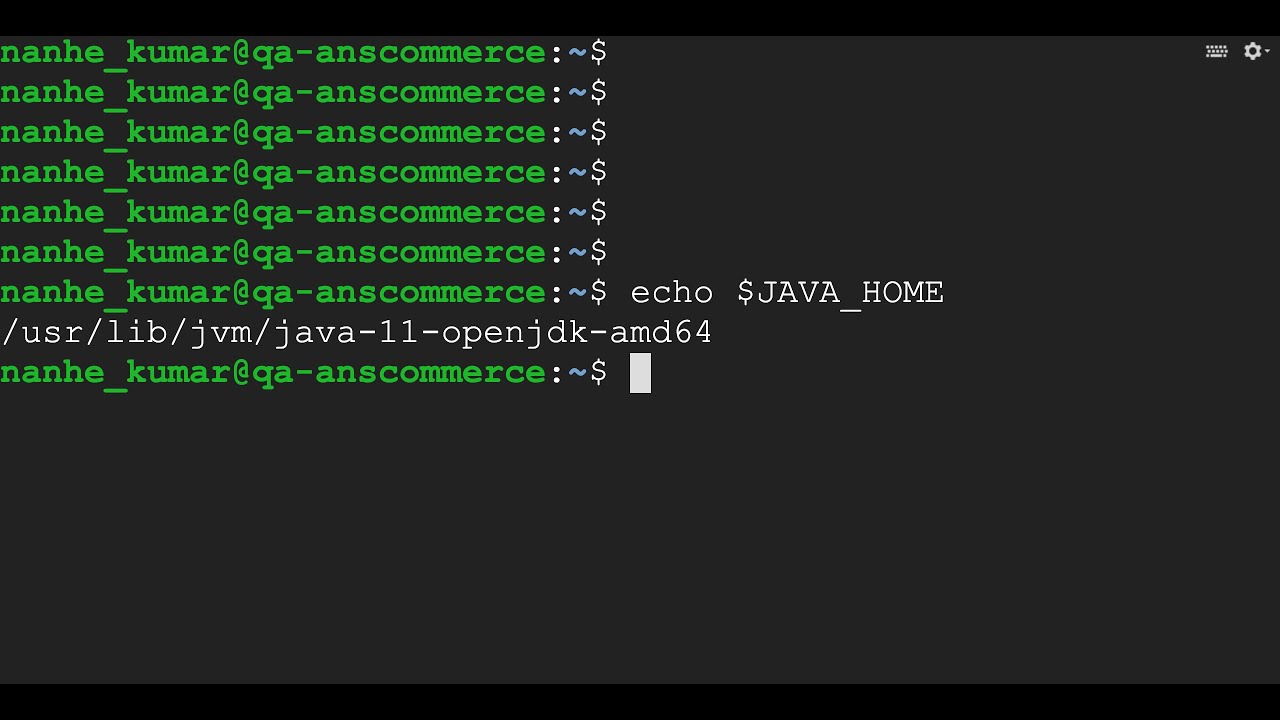

HDFS, which stands for Hadoop Distributed File System, is responsible for persisting data to disk. Hadoop Common is the collection of utilities and libraries that support the other Hadoop modules.

Hadoop clusters are relatively complex to set up, so the project includes a stand-alone mode, which is suitable for learning about Hadoop, performing simple operations, and debugging.

- Fault tolerance: the Hadoop ecosystem can replicate input data onto other cluster nodes. That way, if a cluster node fails, data processing can still proceed by using the copy stored on another node.
- Scalability: a Hadoop cluster can be scaled out by adding more cluster nodes.
- Data locality: Hadoop sends the processing logic (not the actual data) to the computing nodes, thus consuming less network bandwidth. This is called the “data locality concept”, and it helps increase the efficiency of Hadoop-based applications.

One option for installing Java is to use the version packaged with Ubuntu. By default, Ubuntu 22.
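Fault tolerance and data locality work together. Because each block is stored on several nodes, the scheduler can usually find a live node that already holds the data and send the task there. The toy Python model below sketches that idea under several assumptions:

- It uses 3 copies per block, mirroring the usual HDFS default for `dfs.replication`.
- It places replicas round-robin. This is not HDFS's real rack-aware placement policy.
- The node names and block IDs are hypothetical.

This is an illustration only, not how Hadoop's scheduler is actually implemented.

```python
from typing import Dict, List, Optional, Set

# Assumption: 3 copies per block, matching the usual HDFS default (dfs.replication).
REPLICATION_FACTOR = 3


def place_replicas(
    blocks: List[str], nodes: List[str], factor: int = REPLICATION_FACTOR
) -> Dict[str, List[str]]:
    """Store each block on `factor` distinct nodes using simple round-robin.

    Real HDFS uses a rack-aware placement policy; round-robin just keeps
    this example deterministic.
    """
    if factor > len(nodes):
        raise ValueError("replication factor exceeds the number of nodes")
    return {
        block: [nodes[(i + k) % len(nodes)] for k in range(factor)]
        for i, block in enumerate(blocks)
    }


def schedule_task(
    block: str, placement: Dict[str, List[str]], live_nodes: Set[str]
) -> Optional[str]:
    """Pick a live node that already holds the block (data locality).

    The task's logic is sent to that node instead of copying the block over
    the network. If a replica's node is down, the next replica is tried
    (fault tolerance). Returns None when every replica's node is down.
    """
    for node in placement[block]:
        if node in live_nodes:
            return node
    return None


def schedule_job(
    placement: Dict[str, List[str]], live_nodes: Set[str]
) -> Dict[str, Optional[str]]:
    """Schedule one task per block."""
    return {block: schedule_task(block, placement, live_nodes) for block in placement}


if __name__ == "__main__":
    nodes = ["node1", "node2", "node3", "node4"]  # hypothetical node names
    placement = place_replicas(["blk_0", "blk_1"], nodes)  # hypothetical block IDs
    print(schedule_job(placement, set(nodes)))
    # {'blk_0': 'node1', 'blk_1': 'node2'}
    print(schedule_job(placement, {"node2", "node3", "node4"}))  # node1 has failed
    # {'blk_0': 'node2', 'blk_1': 'node2'}
```

In the second run, `node1` is gone, yet `blk_0` is still processed locally on `node2` because a replica lives there. A task only has nowhere local to run once every node holding a copy of its block is down.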
